Keyword Tax

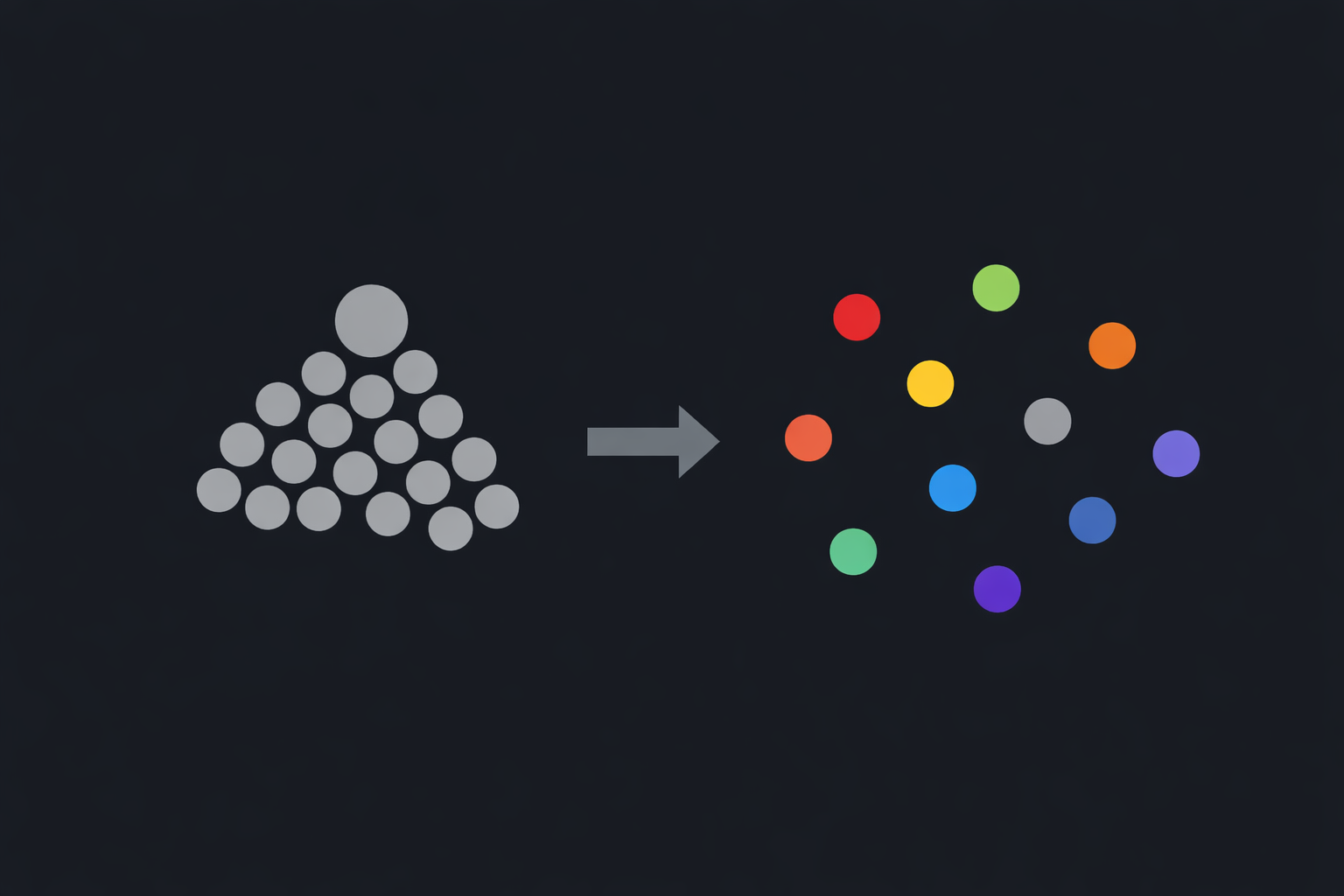

Keywords herd traffic into a narrow, dense auction. Five physical therapists — a climbing specialist, a pelvic floor specialist, a pediatric specialist, a sports rehab specialist, and a generalist — all bid on “physical therapy.” The auction can’t tell them apart. Each bin has 4–5 bidders fighting over every query, pushing clearing prices up and extracting maximum revenue. This is what the publisher wants.

Levin & Milgrom (2010) call this conflation: pooling heterogeneous items into one auction. The auction mechanism is efficient (GSP works); the item definition is not. The climbing PT pays to compete on “pelvic floor exercises after C-section.” The pelvic floor PT pays to compete on “finger pulley injury from rock climbing.” Neither will convert on the other’s queries, but the keyword bin forces them into the same auction anyway. That’s the keyword tax.

Can embedding auctions fix it? We built both auction types and ran 50 randomized trials.

The Simulation

Fifteen advertisers compete across 62 queries in a high-dimensional embedding space using real embeddings from BGE-small-en-v1.5:

- Physical therapy (5): climbing PT, sports PT, pelvic floor PT, pediatric PT, general PT

- Fitness coaching (4): climbing coach, running coach, CrossFit coach, personal trainer

- Nutrition (4): sports dietitian, gut health specialist, weight loss coach, general nutritionist

- Tutoring (2): ADHD math tutor, general math tutor

Eleven are specialists; four are generalists who compete broadly. Specialists have a narrow targeting radius (σ=0.30) and a high max value (12.0), reflecting their conversion advantage. Generalists have a wide radius (σ=0.55) and a moderate max value (6.0). The 2x value ratio is an assumption, though a plausible one: a climber who finds the climbing PT books follow-up sessions and refers other climbers, while a climber who finds a general PT might not come back.

Two auction mechanisms:

- Keywords. Queries binned by cluster. Only advertisers in the matching cluster compete, on price alone. Today’s market.

- Embeddings. Full `log(price) - dist²/σ²` scoring. Agents optimize bid, position, and σ. Tested with and without relocation fees penalizing drift from committed position.
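To make the two mechanisms concrete, here is a minimal sketch of winner selection under each. It is not the simulation code: the 2-D positions, the bids, and the function names are invented for illustration, the bid stands in for the price, and payment rules (GSP pricing) are left out.

```python
import math

def embedding_score(bid, query, position, sigma):
    """Score = log(price) - dist^2 / sigma^2, using the bid as the price."""
    dist_sq = sum((q - p) ** 2 for q, p in zip(query, position))
    return math.log(bid) - dist_sq / sigma ** 2

def keyword_winner(query_cluster, advertisers):
    """Keyword bin: only advertisers in the matching cluster compete, on price alone."""
    eligible = [a for a in advertisers if a["cluster"] == query_cluster]
    return max(eligible, key=lambda a: a["bid"])["name"]

def embedding_winner(query, advertisers):
    """Embedding auction: every advertiser is scored against the query position."""
    return max(
        advertisers,
        key=lambda a: embedding_score(a["bid"], query, a["position"], a["sigma"]),
    )["name"]

# Hypothetical toy data: two PTs in the same keyword bin, 2-D instead of real embeddings.
advertisers = [
    {"name": "climbing PT", "cluster": "physical therapy",
     "position": (0.0, 0.0), "sigma": 0.30, "bid": 4.0},
    {"name": "general PT", "cluster": "physical therapy",
     "position": (0.5, 0.0), "sigma": 0.55, "bid": 6.0},
]
climbing_query = (0.05, 0.0)  # a query sitting close to the climbing PT

print(keyword_winner("physical therapy", advertisers))
print(embedding_winner(climbing_query, advertisers))
```

With these toy numbers the keyword bin goes to the higher bid (the general PT), while the embedding score lets the nearby climbing PT win despite bidding less.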

The simulation code is open source.

Part 1: Would a Monopolist Switch?

What happens if a single publisher replaces their keyword auction with an embedding auction?

| Metric | Keywords | Embeddings | Effect |
|---|---|---|---|
| Value efficiency | 0.858 ± 0.015 | 0.793 ± 0.032 | lower ¹ |
| Win diversity | 0.809 ± 0.016 | 0.833 ± 0.049 | higher ² |
| Specialist surplus | -0.807 ± 0.280 | -0.695 ± 0.329 | inconclusive |
| Generalist surplus | -0.233 ± 0.411 | -0.022 ± 0.129 | higher ² |
| Avg surplus/round | -0.654 ± 0.200 | -0.516 ± 0.238 | higher ² |
| Publisher revenue/round | 79.39 ± 1.72 | 72.82 ± 2.59 | lower ¹ |

50 trials per cell. Welch’s t-test: ¹ p<0.001, ² p<0.01.
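For readers who want to check the significance labels, Welch's t statistic can be recomputed from the table's summary numbers. This is a sketch under one assumption the post does not state: that the ± values are per-cell standard deviations across the 50 trials. The function name `welch_t` is ours.

```python
import math

def welch_t(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    var_a = sd_a ** 2 / n_a  # squared standard error of mean A
    var_b = sd_b ** 2 / n_b  # squared standard error of mean B
    t = (mean_a - mean_b) / math.sqrt(var_a + var_b)
    df = (var_a + var_b) ** 2 / (var_a ** 2 / (n_a - 1) + var_b ** 2 / (n_b - 1))
    return t, df

# Assumption: the "±" columns are standard deviations over 50 trials.
t_rev, df_rev = welch_t(79.39, 1.72, 50, 72.82, 2.59, 50)       # publisher revenue
t_spec, df_spec = welch_t(-0.695, 0.329, 50, -0.807, 0.280, 50)  # specialist surplus

print(f"revenue:    t={t_rev:.2f}, df={df_rev:.1f}")
print(f"specialist: t={t_spec:.2f}, df={df_spec:.1f}")
```

Under that assumption the revenue gap yields a very large t, while the specialist surplus gap yields a t below 2, which matches the table's "lower ¹" and "inconclusive" labels.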

Publisher revenue drops 8%, from 79.39 to 72.82 (p<0.001). That surplus goes to the advertisers. Generalists benefit most, going from -0.233 to nearly break-even at -0.022. Specialist surplus improves directionally (-0.807 to -0.695) but doesn’t reach significance; more on that below.

The keyword tax, measured

Specialists lose about 3.5x as much as generalists (-0.807 vs -0.233 per round). The conflation penalty is visible in the data: specialists pay to compete on queries they can’t convert.

What embeddings fix

Embeddings move surplus from the publisher to the advertisers. Win diversity improves from 0.809 to 0.833 (p<0.01), so the consumer who searches “finger pulley injury from rock climbing” finds the climbing PT, not whoever bid highest on “physical therapy.”

Specialist surplus improves directionally (-0.807 to -0.695, ns) but specialists are still losing money. Without relocation fees, advertisers drift toward popular queries and the niche advantage erodes.

Adding relocation fees changes the picture. Specialist surplus goes from -0.695 to +0.021 (p<0.001). Specialists make money. Fees pin them at their niche, and their conversion advantage translates into winning their best queries at prices that leave positive surplus. Win diversity climbs further to 0.876. Value efficiency recovers to 0.834, within 2.4pp of keywords.
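The post does not spell out the fee's functional form, so the following is only one way a drift penalty could look: a fee proportional to squared distance from the committed position, subtracted from the advertiser's surplus. The quadratic form, the `fee_rate` parameter, and both function names are assumptions for illustration.

```python
def relocation_fee(position, committed, fee_rate):
    """Hypothetical fee: fee_rate times squared drift from the committed position."""
    drift_sq = sum((p - c) ** 2 for p, c in zip(position, committed))
    return fee_rate * drift_sq

def net_surplus(value_won, payment, position, committed, fee_rate):
    """Surplus after paying for the impression and for any drift."""
    return value_won - payment - relocation_fee(position, committed, fee_rate)

committed = (0.0, 0.0)  # the niche the advertiser committed to
drifted = (0.3, 0.4)    # a position moved toward more popular queries

stay = net_surplus(12.0, 11.5, committed, committed, fee_rate=2.0)
move = net_surplus(12.0, 11.5, drifted, committed, fee_rate=2.0)
print(stay, move)
```

In this toy case the same win is profitable at the committed position and unprofitable after drifting, which is the pinning effect described above.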

Why a monopolist wouldn’t switch

Dense keyword auctions extract more rent. The publisher’s optimal auction is α = 0: no distance weighting, everyone competes everywhere. That’s keywords.

This is already visible in practice: Google keeps expanding broad match and deprecating exact match, making keyword bins vaguer and auctions denser. Athey & Gans (2010) showed theoretically why: better targeting disproportionately benefits large general-audience platforms, which can segment audiences to match what niche publishers offer naturally. The dominant platform’s rational move is to keep targeting coarse enough that its breadth advantage holds. In a recent industry poll, more than 50% of respondents said small businesses have been priced out of Google Ads entirely.

A monopolist publisher would not adopt embedding auctions. Specialists have no outside option: they either pay the keyword tax or don’t advertise.

Part 2: What If a Competitor Offers Embeddings?

If a competitor offers embedding auctions, every participant can compare their surplus:

| Participant | Keywords (incumbent) | Embeddings + fees (competitor) | Switching gain |
|---|---|---|---|
| Specialists (11) | -0.807/round | +0.021/round | +0.828 |
| Generalists (4) | -0.233/round | -0.052/round | +0.181 |
| Publisher | 79.39/round | 67.23/round | -12.16 |

Specialists gain +0.828 per round, going from losing money to making money. That’s the switching incentive that bootstraps the competing exchange. Generalists gain too, but specialists gain about 4.6x as much and outnumber generalists 11 to 4.
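The switching gains are plain differences of the per-round figures in the table. A short sketch of the arithmetic, with dictionary names of our own choosing:

```python
# Per-round figures copied from the table above.
keywords = {"specialists": -0.807, "generalists": -0.233, "publisher": 79.39}
embeddings_with_fees = {"specialists": 0.021, "generalists": -0.052, "publisher": 67.23}

# Switching gain = surplus (or revenue) under embeddings minus under keywords.
gains = {k: round(embeddings_with_fees[k] - keywords[k], 3) for k in keywords}

# How much larger the specialist gain is than the generalist gain.
ratio = gains["specialists"] / gains["generalists"]

print(gains)
print(round(ratio, 2))
```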

The distribution moat

But advertisers go where the users are. No queries, no auction.

Google pays Apple over $18 billion a year to be the default search engine on Safari. Queries flow to keyword auctions because users flow to Google, and users flow to Google because of a default payment that costs more per year than most companies are worth.

The keyword equilibrium is stable because the moat is expensive to cross.

Where the queries are going

AI chatbots already have the traffic. They just can’t monetize it.

Perplexity, ChatGPT, Claude, Gemini: millions of queries, burning inference costs, no ad revenue. These queries are conversational: “my finger hurts after a climbing session, should I see a physical therapist?” There’s no keyword to bid on when the query is a paragraph. But it maps to the climbing PT’s position in embedding space.

Meanwhile, search advertising is shrinking. As covered in The Convergence, organic CTR dropped 65% and paid CTR fell 68% on queries where AI Overviews appeared. Specialists paying the keyword tax are the first to get priced out, and the chatbots already have the queries they’d pay to reach. The connection is missing.

The missing piece

The long tail of chatbot platforms (vertical assistants, domain-specific tools, niche communities) doesn’t exist yet, because there’s no way to monetize conversational traffic. An embedding auction that works across platforms would let any chatbot with a focused query stream sell ad inventory. The equilibrium is thousands of small platforms, connected by a shared auction layer.

Whether embedding auctions generate enough revenue per query to offset inference costs is open. Perplexity tried keyword-style ads: $20K in total revenue. OpenAI projects $1B in 2026 ads against $14B in inference costs. Current ad formats are a poor fit for conversational queries. Embedding auctions might change the yield, but that’s speculation, not measurement.

The climbing PT who’s losing 0.807 per round on Google keywords will buy ads on the platform where her niche queries exist, at prices where she breaks even. The keyword incumbent can’t replicate this without cannibalizing its own revenue.

What ships first will be wrong

The likely first implementation is RAG-based ad selection: embed the query, find the nearest ad embeddings, show the top results. This is already how these platforms retrieve context. Extending it to ads is a small step.

But RAG selection is a Voronoi diagram: equal radius for every advertiser, no price integration, and no way to control competitive radius. Better resolution, same structural flaw.

Whoever ships RAG ad selection first will discover what the simulation already shows: without per-advertiser σ, price-integrated scoring, and drift penalties, the embedding auction degrades. Positions drift toward popular queries and aggressive bids override relevance. They’ll patch in radius control, then scoring, then fees. They’ll reinvent the power diagram auction incrementally.
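The difference between nearest-neighbor retrieval and price-integrated scoring can be sketched in a few lines. The 2-D positions, bids, and function names below are hypothetical; the scored variant uses the `log(price) - dist²/σ²` rule from the simulation description, with the bid standing in for the price.

```python
import math

def dist_sq(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def rag_select(query, ads):
    """RAG-style selection: nearest ad embedding wins; bid and sigma are ignored."""
    return min(ads, key=lambda ad: dist_sq(query, ad["position"]))["name"]

def scored_select(query, ads):
    """Price-integrated selection with a per-advertiser radius sigma."""
    return max(
        ads,
        key=lambda ad: math.log(ad["bid"]) - dist_sq(query, ad["position"]) / ad["sigma"] ** 2,
    )["name"]

# Hypothetical toy ads in 2-D.
ads = [
    {"name": "climbing PT", "position": (0.0, 0.0), "sigma": 0.30, "bid": 4.0},
    {"name": "general PT", "position": (0.5, 0.0), "sigma": 0.55, "bid": 6.0},
]
in_between_query = (0.2, 0.0)  # somewhat closer to the climbing PT

print(rag_select(in_between_query, ads))
print(scored_select(in_between_query, ads))
```

Here nearest-neighbor selection hands the query to the climbing PT no matter what anyone bids, while the scored version lets the general PT's wider σ and higher bid carry it. Neither outcome is "correct" in general; the point is that plain retrieval gives advertisers no lever over price or reach.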

The Caveats

The 2x specialist conversion advantage drives the surplus story. At 1.25x (v3.3), all effects shrank to non-significance. The mechanism works at any ratio (specialists always do directionally better with embeddings) but whether the effect is significant depends on how much better specialists actually convert. The argument is strongest for deep niches with high repeat-customer value.

The marketplace wedge doesn’t require the simulation’s exact numbers. It requires that AI platforms need ad revenue (inference costs aren’t free) and that some specialists are losing enough on keywords to buy ads elsewhere. Whether the first embedding auction emerges from an open protocol or from a startup building the SSP layer is not a question simulation can answer.

Part of the Vector Space series. Written with help from Claude Opus 4.6.